As of early 2023, service providers have a broad installed base of over 13 million ports of 10G for 4G/5G and fixed-access networks that could benefit significantly from a network upgrade to 100G. Historically, service providers have taken a cautious approach towards upgrades as price and power considerations have kept many parts of the network at 10G, despite the significant increases in bandwidth downstream with both consumer and enterprise buildouts.

There is a substantial upgrade opportunity as next-generation 100G DSPs come to market. New 100G DSPs should be able to improve energy efficiency, while providing robust long-haul connections in the 80+ km range. Traditional Dense Wavelength Division Multiplexing (DWDM) technologies or first-generation ZR/ZR+ 100G modules serve these longer distances today but are very power-hungry and approaching full saturation. With new 100G DSPs entering the market expected to offer significant cost savings compared to previous technologies, we anticipate that service providers will want to upgrade and that additional market opportunities will open in service provider, cloud, and enterprise edge networks.

As customer bandwidth demand continues to grow, the need for higher-capacity links continues to increase. Customers expect a fast, robust connection, whether at home on their broadband link, roaming at a coffee shop, or working in an office campus.

We anticipate many service providers will look at 100G Coherent as the next speed on which to standardize their networks, while some may upgrade existing links to higher speeds. In addition, because of the compelling cost, these service providers will start adding new edge connectivity that was not cost-effective or practical with older 10G technologies.

The 100G Coherent opportunity will hit several large markets. We see traditional wireless opportunities in 5G base station backhaul and newer fixed wireless access deployments. Additionally, broadband providers will benefit from 100G Coherent technology for both consumer and enterprise-facing connectivity.

We anticipate a variety of other network opportunities with 100G. For example, enterprises may deploy 100G data center interconnects with ZR/ZR+ for more extensive facilities as an alternative to relying on the cloud, colocation, or centralized data centers, as bandwidth is no longer limited by location. Another potential is additional rural broadband buildouts as new cost structures allow service providers to reach smaller sites profitably.

We expect vendors to introduce DSPs, transceivers, and networking equipment designed to address the network upgrade opportunity throughout 2023, with modest shipments in 2023 and a more significant ramp in 2024 after operators go through their qualification cycle.

By Alan Weckel, Founder and Technology Analyst at 650 Group

200 Gbps Active Electrical Cable (AEC) to Play Key Role in Current and Next Generation Server Connectivity

Server bandwidth continues to explode. Led by a confluence of next-generation processors, lower process geometry, better software, and the push toward AI, the market is seeing a rapid transition to 50 Gbps, 100 Gbps, and even 200 Gbps ports on each server. AI workloads usher in enormous data sets, and additional accelerators in the server (Smart NICs, DPUs, FPGAs, etc.) continue to push AI traffic bandwidth to outpace the overall network growth of the past five years. Our workload projection has AI-based workloads driving nearly 100% bandwidth growth on the server through 2025 compared to 30-50% in traditional workloads.

With such large amounts of data moving into the server, reliability and power become more important to operators. Power budgets are not increasing this fast, and DAC can only achieve so much speed. AECs proved themselves at multiple cloud providers and hyperscalers earlier in 2021. Early 2022 designs indicated additional design wins with AEC. AEC reliability is on par with DACs and far higher than traditional optics. At the same time, AECs have lower power consumption than fiber and can stay comfortably in most operators' power budgets.

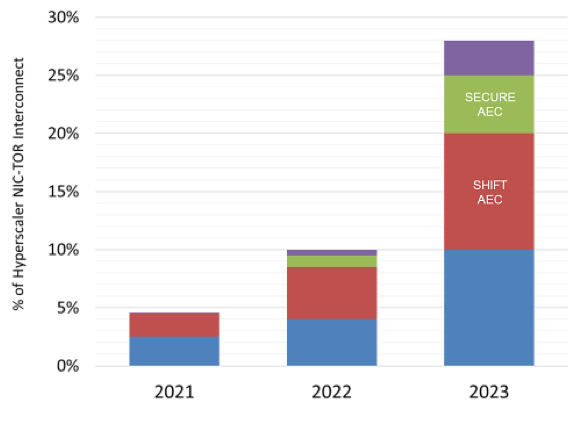

Additionally, AECs are specializing. For example, Credo’s Y-Split SWITCH AEC is designed to support failover, the CLOS AEC supports front-panel connectivity in distributed disaggregated chassis, and the SHIFT AEC can shift between different SERDES lanes (56G PAM4 and 28G NRZ), which allows operators to qualify fewer variations of the cable, future-proofing some connections.

As we look on the OCP Summit floor today, virtually and in person, we see many demos of 200 Gbps. In particular, we spotted implementations at two locations using 200G SWITCH AECs, which Credo announced earlier today. One was with NVIDIA and Wiwynn, and the other with Arrcus and UfiSpace.

The AEC cable will play a more significant role in server connectivity in 2022 and in the overall 56G SERDES connectivity generation to enable new and more powerful applications (AI/ML and existing cloud workloads) to take advantage of higher-speed links.

Traditional enterprise data centers have an Achilles heel – the single TOR per rack architecture. If that TOR fails, it takes the entire rack offline, and as Microsoft recently published, this happens at least 2% of the time in the first 40 days of operation.
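
To get a feel for what a 2% per-rack figure means at fleet scale, here is a minimal sketch. It assumes ToR failures are independent across racks and treats 2% as the per-rack probability over the 40-day window. Both are simplifying assumptions for illustration, not claims taken from the Microsoft data, and the function names are hypothetical.

```python
def p_any_rack_offline(racks: int, p_tor_failure: float = 0.02) -> float:
    """Probability that at least one single-ToR rack goes offline in the window.

    Assumes each rack's ToR fails independently with probability p_tor_failure.
    """
    if racks < 0 or not 0.0 <= p_tor_failure <= 1.0:
        raise ValueError("racks must be >= 0 and probability within [0, 1]")
    return 1.0 - (1.0 - p_tor_failure) ** racks


def expected_racks_offline(racks: int, p_tor_failure: float = 0.02) -> float:
    """Expected number of racks hit by a ToR failure in the window."""
    if racks < 0:
        raise ValueError("racks must be >= 0")
    return racks * p_tor_failure


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        print(n, round(p_any_rack_offline(n), 4), expected_racks_offline(n))
```

Under these assumptions, even a modest fleet is very likely to see at least one rack-level outage during its first weeks, which is why a single ToR per rack is treated as a structural weakness rather than a rare event.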

In the enterprise, this has traditionally been solved with dual-TOR / dual-port NIC MLAG architecture enabling each server to connect to two TORs and the complexity of this connection to be managed by a combination of TOR MLAG software and the hypervisor running on the server. The problem with this approach is speed. NIC speeds are growing from 10G to 100G and beyond. As speed increases, more x86 cores are dedicated to enabling the hypervisor’s management of this configuration. Today, this is about one core for every 2.5Gbps. However, this is not a sustainable path from a power or cost standpoint, especially in hyperscale infrastructure.
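
The scaling problem can be made concrete with a little arithmetic. The sketch below simply applies the "one core for every 2.5 Gbps" figure quoted above to a range of NIC speeds. It assumes the ratio stays constant as speed grows, which is a simplification, and the function name is illustrative.

```python
import math


def hypervisor_cores_needed(nic_gbps: float, gbps_per_core: float = 2.5) -> int:
    """Cores consumed managing the dual-TOR connection at a fixed Gbps-per-core ratio."""
    if nic_gbps < 0 or gbps_per_core <= 0:
        raise ValueError("nic_gbps must be >= 0 and gbps_per_core must be > 0")
    return math.ceil(nic_gbps / gbps_per_core)


if __name__ == "__main__":
    for speed in (10, 25, 50, 100, 200):
        print(f"{speed:>3} Gbps NIC -> {hypervisor_cores_needed(speed)} cores")
```

At 10 Gbps the overhead is 4 cores, but at 100 Gbps the same ratio implies 40 cores, which illustrates why the approach does not scale from a power or cost standpoint.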

Hyperscalers have countered this problem by deploying redundancy at the application layer and running redundant server racks to support a TOR failure. Redundancy adds cost and complexity to the Hyperscaler’s operations by taking expensive servers off the revenue path and having them idle, waiting for a TOR failure.

Enter the Active Electrical Cable (AEC). In their latest configuration, AECs, which have already enabled the implementation of Distributed Disaggregated Chassis (DDC) deployments, are now ready to enable a new deployment of NIC to ToR implementations.

Credo’s HiWire SWITCH AEC eliminates the server management of a dual-TOR configuration and instead presents the server with only a single port. The Network Operating System (NOS), such as SONiC, can manage a MUX located inside the SWITCH AEC. This MUX can switch traffic from one TOR to another in an Active/Standby mode in less than a millisecond. The result is dual TOR reliability with dramatically improved failover performance as compared to MLAG implementations, but without requiring the server to do anything – or frankly even be aware of the dual-TOR architecture.
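
The Active/Standby behavior described above can be pictured as a small state machine. The following is a toy model only: it is not Credo's firmware or the SONiC implementation, and the class, method, and link names (`DualTorMux`, `report_link`, `tor_a`, `tor_b`) are hypothetical. It assumes the controlling software reports link state changes and that the mux does not switch back automatically once a failed path recovers.

```python
from dataclasses import dataclass, field


@dataclass
class DualTorMux:
    """Toy 2:1 mux between a single server-facing port and two ToR links."""

    link_up: dict = field(default_factory=lambda: {"tor_a": True, "tor_b": True})
    active: str = "tor_a"

    def standby(self) -> str:
        """Name of the path that is not currently carrying traffic."""
        return "tor_b" if self.active == "tor_a" else "tor_a"

    def report_link(self, tor: str, up: bool) -> str:
        """Record a link state change and fail over if the active path is down.

        Returns the path that is active after the update. The server-facing
        port is untouched either way, so the server sees a single link.
        """
        if tor not in self.link_up:
            raise KeyError(tor)
        self.link_up[tor] = up
        if not self.link_up[self.active] and self.link_up[self.standby()]:
            self.active = self.standby()
        return self.active


if __name__ == "__main__":
    mux = DualTorMux()
    print(mux.report_link("tor_a", False))  # active ToR fails, traffic moves
    print(mux.report_link("tor_a", True))   # recovery does not preempt
```

The design point this illustrates is that the failover decision lives in the cable and the network operating system, not in the hypervisor, so no server cores are spent on it.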

Before 2021, essentially 100% of NIC-TOR applications used passive DAC cables. We expect the TOR to server connection to evolve to address redundancy and other needs in the changing demands placed on the network. The market is upgrading to higher speeds and server applications are growing in complexity and scale, as shown below.

This topic will be discussed in a pair of livestreaming events hosted by 650 Group with Credo and Microsoft.

Learn more about the events and register for the English or Mandarin session:

English broadcast: Wednesday, July 21, 2021 @ 10:00AM PT

Mandarin broadcast: Thursday, July 22, 2021 @ 9:00AM Shanghai

Every day, consumers put more data in the Cloud and enterprises increase their utilization of Cloud services to conduct business. The Cloud and the digital content it holds continue to make up an increasing portion of the world's economy - even more so with COVID-19 causing a rapid shift and acceleration in digital transformation projects in companies.

To keep up, modernizations that previously took years to deploy are being pushed through quickly because of COVID-19. 2020 is now the year where it is truly Cloud-first, whether that be consumers using Cloud services more for personal activities ranging from interacting with loved ones via Social Media websites to e-learning or the rapid shift to work-from-home (WFH).

With enterprises relying on Cloud for daily operations, the importance of end-to-end security is increasing every day. In the Ethernet Switch (L2) and Routing market (L3/L3+), the interest in MACsec increases with each speed transition. There is a higher attach rate of MACsec with 400 Gbps products compared to 100 Gbps, and we expect with the data center rapidly moving towards 100G per Lambda and 112 Gbps SerDes that MACsec will play a pivotal and significant role in the 800 Gbps market.

End-to-end encryption from the server, often via a SmartNIC, is becoming more common. In the case where a packet crosses between two locations, MACsec encryption secures user/enterprise data from the moment it leaves a Cloud’s data center to the moment it enters the next one. As applications use edge computing resources and become distributed across multiple availability zones and countries, data sovereignty and security become more important and top-of-mind for data center architects.

As Cloud providers and Telco Service Providers adopt 400 Gbps and look toward 800 Gbps, we expect to see more purpose-built MACsec solutions. The data center networking market is also transitioning away from Modular chassis and toward more Fixed CLOS architectures, so we expect more Fixed 1RU solutions with MACsec, especially in the DCI layer. DCI will be a new market for Ethernet platforms, and vendors will look towards new features beyond the ASIC, like MACsec, to compete in this space.

Datacenter architectures are constantly evolving as compute, storage, and networking innovations drive continual improvement. COVID-19 is accelerating many trends in technology. Digital transformation projects that would normally take years, or that sit in industries that are slow to deploy, are going through years’ worth of innovation in a matter of months.

K-12 online learning is a great example of a rapid shift to Cloud-based tools requiring a massive bandwidth explosion. In online learning, this step function in bandwidth demand will likely change the education vertical permanently, even when classrooms begin to refill. The paradigm shift of where users reside and how they access data will persist, with 5G magnifying the need for network changes to keep pace as available bandwidth increases. Unlicensed spectrum complements 5G as additional spectrum availability increases total available bandwidth and will allow for all sorts of new IoT devices with additional information processing in the Cloud for each IoT device.

For networking, three changes are putting pressure on networks and driving design. First, servers are getting more efficient and driving an increasing amount of traffic onto the network. More efficient servers change the architectural relationship between the Top-of-Rack (TOR) and aggregation/spine layers of the network. At the very least, cloud providers need additional aggregation tiers.

Second, the top of the network needs additional tiers of networking. As data centers move from single-building to multi-building deployments, additional tiers are needed to connect buildings in both the same facility and between regions. At a minimum, Data Center Interconnect (DCI) introduces two new tiers of datacenter networking.

Third, high-speed ASICs, 12.8 Tbps and higher, offer advantages in fixed form factor boxes compared to modular chassis. The building blocks shift towards 1RU and 2RU pizza-box switches with copper interconnects vs. the backplane of a chassis. Single racks can approach and are about to exceed 100 Tbps capacity in a non-blocking way.

Hyperscalers’ use of DDC is increasing rapidly, and use cases are emerging beyond the cloud. Given the additional networking tiers, the cloud is testing and getting ready to deploy DDC elements in the first modular aggregation tier, utilizing L2 ASICs, and is exploring the use of Fixed boxes in the L3+/routing space. DDC, or fixed CLOS architectures, have been around for a while and are proven. Advances in cabling and high-speed ASICs are helping push for more widespread adoption.

Demand doesn’t stop there. The three largest western Router vendors have all announced 1RU platforms to support the trend of Fixed topologies. To date, there are over ten unique ASICs, and nearly two dozen system companies with product offerings well-suited to this change of the architecture - in a way, a record number of platforms.

Beyond hyperscalers, traditional service providers see DDC as an architecture that will increase agility and create a more cloud-like architecture in their networks. Peering sites, 5G networks, and traditional bandwidth expansion can all potentially benefit from moving away from Modular chassis and towards Fixed architectures.

By Alan Weckel, @AlanWeckel, Founder and Technology Analyst at 650 Group, @650Group

Cloud providers are rapidly evolving their network topology architectures as they move towards 400 Gbps and beyond. One trend resonating across the industry is the move towards CLOS switch rack or Distributed Disaggregated Chassis (DDC) topologies and the use of copper above the server access layer. However, the distances DAC can serve continue to shrink with each increase in speed, and fiber remains costly.

DDC will ramp in 2H20. Both Service Provider and Data Center networks will take advantage of the power density provided by 25.6Tbps switch silicon to deploy dense in-rack CLOS architectures. Active Electrical Cables (AEC) such as HiWire™ AEC are a key enabling technology for DDC architectures.

In many ways, DDC CLOS architectures use copper cables as a replacement for the traditional modular chassis backplane. As we look towards this architecture change, we see three unique form factors of cable emerging to replace DAC and AOC.

Active Optical Cable (AOC) Replacement

Products like Credo HiWire™ SPAN AEC will begin to replace AOC. A fully populated rack of AOC can often draw as much power as the switches themselves, and the current supply chain does not have consistent high-volume availability. AOC also carries the relatively high cost of a fiber solution. This type of copper solution will have longer distances and will potentially move into use cases around middle-of-row connectivity.

Gear Shifting Splitter Cables

Products like Credo HiWire™ SHIFT AEC will gearbox between SERDES speeds. While today, the most common option is splitting a 56 Gbps SERDES into two 25 Gbps ports, we expect this type of cable to become more popular when 112 Gbps SERDES begin to ship. For example, a purpose-built 48-port 100 Gbps switch with 112 Gbps SERDES could become multipurpose and split down to 25/50 Gbps ports for server access or switch-to-switch connectivity.
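
The port arithmetic behind the 48-port example can be sketched as follows. This is an illustration only: it uses nominal port speeds (100, 50, 25 Gbps) rather than raw SERDES line rates, assumes every port is split evenly, and the function name `breakout` is hypothetical.

```python
def breakout(ports: int, port_gbps: int, target_gbps: int) -> int:
    """Number of lower-speed ports obtained by splitting every port evenly."""
    if ports <= 0 or port_gbps <= 0 or target_gbps <= 0:
        raise ValueError("all arguments must be positive")
    if port_gbps % target_gbps:
        raise ValueError("target speed must divide the port speed evenly")
    return ports * (port_gbps // target_gbps)


if __name__ == "__main__":
    # A 48-port 100 Gbps switch split down for server access.
    print(breakout(48, 100, 50))  # 96 ports of 50 Gbps
    print(breakout(48, 100, 25))  # 192 ports of 25 Gbps
```

The same box therefore covers three port counts and speeds, which is the sense in which a gear-shifting cable makes a purpose-built switch multipurpose.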

Low Cost, Short-Reach Cables

Products like Credo HiWire™ CLOS AEC will begin to enter the market for short distances within the rack. Today DAC comes in one type of solution; however, with DDCs becoming more popular, a new type of purpose-built and short-reach cable should emerge to connect switches within a rack. By purpose-building for this use case, the cable should be cheaper, thinner and lower power, which is attractive when trying to pack so many cables into a single rack.

We expect that newer copper technologies will also benefit from improved process geometries over the next 12-18 months. Moving from 28nm to 12nm and below will help drive down cost in the interconnect part of the market, in much the same way that moving from 28nm to 16nm to 7nm brought huge cost savings in the switch ASIC itself.

In the middle of November, Credo joined the Open Compute Project (OCP) as a Community Member. This is a big deal, as OCP has become one of the largest and most influential organizations and shows for driving high-speed network connectivity in the Cloud.

The Cloud is rapidly adopting higher-speed networking to adapt to faster compute and new workloads such as ML (machine learning) and AI (artificial intelligence). The number of server platforms that members of OCP are designing and implementing continues to grow each year. There are now robust designs ranging from X86 to ARM to handle a wide variety of workloads.

Robust server designs need high-speed connectivity and a move towards disaggregated and flexible network topologies. 12.8 Tbps and 400 Gbps began introducing some of these designs with chiplet-based ASICs and high-density 100 Gbps solutions beyond a typical 1RU platform. The market will begin to see 25.6 Tbps switch platforms in 2020 that will drive yet another set of innovations at the product level to keep up with the bandwidth requirements hyperscalers need. OCP has become one of the key places where the industry gets together to make these developments and showcase new products.

As ASIC speeds increase, the connections between the server and the switch and at the core are rapidly changing as well. Customers are pushing the limits of traditional DAC cables and looking towards new copper technologies, such as Active Electrical Cables (AECs), to keep costs and power down for server access. At the same time, higher speed silicon is causing the aggregation and the core portion of the network to evolve. Converging the top tiers together into racks with short reach has the potential to reduce the number of fiber ports necessary and allow this traditionally fiber-only part of the network to benefit from copper pricing.

With 800 Gbps and 112 Gbps SERDES around the corner, it will be exciting to see what the members of OCP show off at their conference in 2020 to help drive higher-speed connectivity in the data center. At the same time, some of these bleeding edge technologies will begin to form the building blocks of enterprise and service provider networks over the next few years, which we believe will be an important contributor to the switching market during the outer years of our forecast.

While Cloud Providers have always been willing to rethink their networks outside industry consensus (starting disaggregation, implementing Whitebox, 25 Gbps, DAC, splitter cables, fixed aggregation, and core boxes, etc.), there has been one part of the network that has remained consistent. Cloud providers continue to use fiber from the top-of-rack switch all the way to the core with the common reasoning that distance and reliability in those tiers dictate fiber. Part of this rationale is the fact that the aggregation part of the network is usually dispersed in a data center and not centrally located.

Active Ethernet Cables (AEC) such as HiWire™ AEC, which has already gained the support of a consortium of 25 industry leaders in connectivity and datacenter technology, open the door to changing this part of the architecture. 400 Gbps optics lag the availability of 400 Gbps switches by almost a year, and the delay in optics is driving an increasing amount of traffic and tiers into existing 100 Gbps switches. AEC is similar in quality and reliability to fiber, but with a smaller-diameter copper form factor and copper cost.

Cloud Providers of all sizes, enterprises, and Telco service providers can all benefit from rethinking the aggregation layers of their network, especially now when optics are still months away. Centralizing the aggregation switches to one part of the data center, using AEC and 400 Gbps to connect them, can allow for a reduction in the number of switches and the number of tiers in a data center. This can lead to significant savings in both OPEX and CAPEX. Customers worried about the blast radius can have multiple aggregation racks.

CAPEX savings come from reducing the number of switches needed and the number of optics. OPEX savings come from reducing the number of ports; given the need for power savings, any little bit can help.

Customers today can get immediate cost savings by switching future builds to this new type of topology and decrease the use of 400G optics, which will be expensive and have limited availability. Another advantage is that a customer can use the available optics only when needed, thus allowing a broader adoption of 400G and 12.8 Tbps.

A single 12.8 Tbps switch can replace 6 or more 3.2 Tbps switches, as the higher radix removes the need for inter-switch links between low-radix 3.2 Tbps switches at the same tier. The 6:1 compression ratio is compelling, especially when power is taken into account comparing one 12.8 Tbps switch to six fully loaded 3.2 Tbps switches. AEC helps bridge the gap until 400G optics are available, is a good alternative even when the 400G optics become available, and, with a roadmap to 800G, will prove to be a long-term copper alternative to optics in the data center.
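
The radix argument can be checked with a rough count. The sketch below assumes a two-tier leaf-spine fabric built from 32 x 100G (3.2 Tbps) switches that must expose the same 128 x 100G ports as one 12.8 Tbps switch. The topology, the port split, and the function name are assumptions for illustration; the exact ratio in a real network depends on the oversubscription chosen.

```python
import math
from typing import Optional


def two_tier_switch_count(
    ports_needed: int, radix: int, down_ports: Optional[int] = None
) -> dict:
    """Count leaf and spine switches needed to expose ports_needed edge ports.

    down_ports is the number of edge-facing ports per leaf; the remainder are
    uplinks to spines. The default (half the radix) gives a non-blocking fabric.
    """
    if down_ports is None:
        down_ports = radix // 2
    if ports_needed <= 0 or not 0 < down_ports < radix:
        raise ValueError("need ports_needed > 0 and 0 < down_ports < radix")
    up_ports = radix - down_ports
    leaves = math.ceil(ports_needed / down_ports)
    spines = math.ceil(leaves * up_ports / radix)
    return {"leaves": leaves, "spines": spines, "total": leaves + spines}


if __name__ == "__main__":
    print(two_tier_switch_count(128, 32))      # non-blocking: 8 + 4 = 12
    print(two_tier_switch_count(128, 32, 24))  # 3:1 oversubscribed: 6 + 2 = 8
```

Under the strictly non-blocking assumption the count comes out at twelve low-radix switches, and at eight with 3:1 oversubscription, both consistent with the "6 or more" wording. Every uplink port in those fabrics exists only to stitch small switches together, which is the overhead the higher-radix switch removes.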

Next-generation Interconnect Technology Maintains Cost and Power Benefits of Copper at Higher Speeds

DAC has always had a tenuous relationship with the data center. Customers love the low cost, but it has always been the least reliable option and limited on distance. Quality manufacturing can have a big impact on the actual distance a cable can support. Reliability is one of the reasons why enterprises love 10GBASE-T so much compared to using splitter cables as hyperscalers do.

Today, 25 companies founded the HiWire™ Consortium to help accelerate and drive the industry migration towards 400 Gbps and address the need for high-speed server access with copper. Some of the largest suppliers of networking to the Cloud joined together to fill a gap in data center networking. Today the distances that DAC can support reliably continue to shrink as servers move towards higher speeds, and the size of the DAC cable continues to get larger. In some fully loaded scenarios, the cables on a switch can take up more faceplate size making for complicated Top-of-Rack installation and blocking airflow.

HiWire Active Electrical Cables (AEC) help push out the life of copper for server access technology. AECs are a new type of copper cable that competes against DAC and Active Optical Cables (AOCs). With integrated gearbox, retimer and FEC functionality, AEC also allows for speed shifting, PAM4 to NRZ mode conversion and high-integrity, lossless connectivity within the cable, which can enable the industry to look at more efficient network topologies. For example, the cable can convert from 400G PAM4 to 4X100G NRZ, or a 100G SFP DD port can be split to 50 Gbps ports on the server NIC. The latter is especially interesting with new 7.2-8.0 Tbps switches coming to market with SFP DD ports.

The HiWire Consortium is dedicated to providing data center ethernet customers something that consumers already enjoy – plug and play functionality. In the consumer world, if you pick up a cable with a USB-C mark or an HDMI mark, it just works – no evaluation, no tinkering. The USB community accomplishes this through two groups – the USB Promoters Group that does the technical heavy lift of developing electrical and mechanical standards and the USB Implementers Forum (USB-IF) which manages a 3rd party test infrastructure and licenses the USB mark to those cables that meet the requirement.

In the Ethernet world, the work in IEEE and the many MSAs is analogous to the USB Promoters Group, but we have nothing like the USB-IF. This is the gap the HiWire Consortium has been developed to fill: to assemble the building blocks from IEEE and the many MSAs into a specific set of implementations that meet user needs, and then to enable a 3rd party to test AECs to this specification and license a trusted mark. The goal is to push much of the qualification burden from integrators, OEMs and ODMs upstream to AEC manufacturers and ensure a consistent, high quality plug & play product experience.

HiWire is interesting for the market as it can be used today in several use cases ahead of 400G optics availability. It can provide a path forward, with similar capabilities, to 800 Gbps and the 25.6 Tbps switches which are about to come to market. Cloud Providers have the opportunity to remain with the copper technologies and splitter cables they have used for nearly a decade. Not having to move to fiber also helps the industry concentrate on onboard optics and silicon photonics, without needing an interim technology because DAC distances shrink too much as servers become more efficient.

Given the rapid adoption of new servers for Artificial Intelligence (AI) and Machine Learning (ML) as well as the use of PCI Gen 4 and delay in 400 Gbps optics availability, we expect a lot of interest in AEC cables.

Another Important Workload Moves Towards the Cloud Pushing for Higher Speed Networking

Google recently announced its gaming Cloud service, Stadia. Stadia is the start of an important trend of moving rendering of games and other video content to the Cloud and away from devices. As the technology evolves, the Cloud will be capable of 8K video games that are seamless to most users.

To help reduce latency and ensure a premium gaming experience, Google’s approach includes about 7,500 edge nodes and a specialized controller which talks directly to Google’s Cloud. Stadia represents a dramatic and significant shift in gaming, one that will allow casual gamers to play on familiar hardware devices without having to buy a new dedicated gaming system. It will also allow games to be updated without a user downloading patches, etc. This will potentially open the market to additional game developers, and it could also affect the fundamental business structure of the industry by moving it towards a subscription model. A Cloud approach will also let developers roll out or try different versions of games regionally. This could trigger complementary, highly targeted advertising revenue opportunities. Imagine, for example, a pizza shop in the game being rendered to a local business for advertising purposes.

Bringing this back to networking, the move towards Cloud rendered gaming is another new use case that will put additional networking demand and ports into the Cloud. The bandwidth and GPU intensity will only increase as developers and Google learn, grow and optimize the platform. The Cloud will continue to move rapidly towards higher speed technologies. This is a prime use case of 400 Gbps and why 800 Gbps is so important and needs to follow quickly. The networking industry will not only enjoy an increase in demand for overall bandwidth but will also benefit from the secondary high-speed network which will exist to connect these gaming clusters together internally.

Cloud rendered gaming will create incremental new high-speed TAM for networking suppliers. It is only one of several new use cases that Cloud companies can engage as their infrastructure becomes more robust and ubiquitous and, in a way, one that drives both core and edge computing.

Credo is committed to leading the networking industry’s transition to higher speed by being first to market with next generation SerDes technologies. Credo’s 112Gbps SerDes are being deployed in a variety of forms including IP, chiplets, line card components, optical components, and Active Ethernet cables. The Credo technology supports nearly every connection made within a Data Center and provides the foundation to move to the 800G performance node.

Will Enable Second Generation 400 Gbps Capable of Longer Distances

In late 2018, Barefoot Networks publicly announced the first ever 7nm plus chiplet switch ASIC product, the Tofino 2 chip. This is the start of a market transformation as Ethernet Switch design will begin to embrace disaggregation (such as the chiplet type design) much like the rest of the data center market. We will see strong product demonstrations at OFC, OCP, and numerous other shows throughout 2019 as the ecosystem gears up for this important architecture shift. With 7nm available from major foundries, the market will begin to move in this heterogeneous direction as a way to reach higher capacity switch silicon. Not only will this pave the way for 25.6 Tbps and 51.2 Tbps fabrics, we will see increased product agility, lower cost, better power, and more product offerings from chiplet design.

It is important to note that switch ASICs have had both the analog and logic designs put on the same semiconductor chip for over a decade, which forced designers to shrink the analog portion of the design to the same semiconductor process geometry as the logic design. However, since the analog part of chip design is fundamentally different from the logic design, it moves on a different design timetable than the logic design. Chiplets have a huge advantage as the analog part of the design does not have to shrink at the same pace as the logic component. This disaggregation of technology allows for older and proven analog chip components to be packaged along with cutting edge process geometry-based logic chips. As we saw in the Tofino 2 chip, the analog component is provided by a different vendor and is on a 28nm or 16nm process geometry, while the Barefoot logic is in 7nm.

Second generation 12.8 Tbps fabrics (defined as 7nm and chiplet architectures vs. 16nm single chip solutions) will also enable Ethernet Switches to take on metro deployments that today are primarily served by stand-alone optical transport gear. This will significantly increase the addressable market for Ethernet Switch products and vendors, something that is generally a good thing for the Ethernet ecosystem, customers, and industry.

The speed of product innovation in 2019 will be fast-paced. With 56 Gbps SERDES chips just now starting to ship, we will see many next generation 112 Gbps SERDES announcements in 2019. This, in turn, will help set up 2020 to be a transition year to the shipment of higher speeds, just in time to meet the demand of new high-bandwidth workloads such as Artificial Intelligence (AI) and video game streaming. AI will continue to see massive investment dollars in 2019 and beyond, increasing demands on the network, while game streaming will come to life as Microsoft and Sony deliver their next consoles. These new workloads will significantly impact the network and will cause a change in network evolution, data center speeds and network programmability.

One of the first use cases for 400 Gbps will be in the aggregation/core part of the network. Cloud providers will look at 400 Gbps and above for connecting their data center properties together. This will cause Data Center Interconnect (DCI) to become a larger part of Cloud CAPEX in 2019 and 2020.

With increased demand for global network bandwidth, the hyper-scale cloud providers are in a position to consume the next generation of higher capacity, 100G SerDes enabled switches as soon as they can get their hands on them. Cloud providers are already operating at extremely high rates of utilization and would welcome a higher performance, intelligent and more efficient infrastructure boost. The key technology building blocks are in place to prototype 25.6Tbps switch based networks in 2H2019 and ramp in 2020. This is a year or two ahead of popular thought.

Today’s ramp of 100G per lambda optics in the form of DR4/FR4 optical modules, which is enabling 50G SerDes based 12.8Tbps switches, is laying the groundwork for a rapid transition to 25.6Tbps switches based on 100G SerDes technology. To understand the possibility of a rapid transition, it is important to look back at the optics transitions from 10G to 100G. The move to 25G SerDes enabled switches required the optics to move from 10G to 25G per lambda. This transition was challenging and caused a two year delay in ramping 3.2Tbps switch enabled networks. The next big move to 100G per lambda for the ramp of 12.8Tbps, 1RU switches requires a doubling in baud rate and modulation (PAM2 to PAM4). As a result, optics again are the delay to mass deployment of the latest generation of 12.8Tbps switches, but this strategic, aggressive move to 100G per lambda as a mainstream technology in 2019 creates the unique inflection point of optics leading the next generation switch silicon for the first time since god knows when. Moving from in-module 50G to 100G gearboxes to 100G to 100G retimers to match the switch single-lane rate is generally recognized as straightforward.
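
The relationship between baud rate, modulation, and lane count can be sketched numerically. The example below uses nominal rates only and ignores FEC and encoding overhead, so real line rates run slightly higher. The function names are illustrative and not taken from any standard.

```python
def bits_per_symbol(pam_levels: int) -> int:
    """Bits carried per symbol for a PAM-N signal (PAM2/NRZ -> 1, PAM4 -> 2)."""
    if pam_levels < 2 or pam_levels & (pam_levels - 1):
        raise ValueError("PAM level count must be a power of two")
    return pam_levels.bit_length() - 1


def lane_rate_gbps(baud_gbd: int, pam_levels: int) -> int:
    """Nominal lane rate: symbol rate times bits per symbol."""
    return baud_gbd * bits_per_symbol(pam_levels)


def serdes_lanes(switch_gbps: int, lane_gbps: int) -> int:
    """Number of SerDes lanes a switch needs at a given per-lane rate."""
    lanes, remainder = divmod(switch_gbps, lane_gbps)
    if remainder:
        raise ValueError("switch capacity must be a multiple of the lane rate")
    return lanes


if __name__ == "__main__":
    print(lane_rate_gbps(25, 2))  # 25G per lambda: 25 GBd, PAM2
    print(lane_rate_gbps(50, 4))  # 100G per lambda: 50 GBd, PAM4
    for capacity, lane in ((3200, 25), (12800, 50), (25600, 100)):
        print(capacity, lane, serdes_lanes(capacity, lane))
```

Going from 25G to 100G per lambda doubles both factors at once (25 to 50 GBd, and 1 to 2 bits per symbol). On the electrical side, a 12.8Tbps switch at 50G per lane and a 25.6Tbps switch at 100G per lane both work out to 256 lanes, so the step to 25.6Tbps is a per-lane speed increase rather than a lane-count increase.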

Moving to 100G end-to-end connectivity. As discussed, the fundamental 100G per lambda optical PMDs are in place. In parallel, Credo has been publicly demonstrating low power, high performance 100G single-lane electrical SerDes manufactured in mature 16nm technology since December 2017. We as an industry simply need to agree on some common-sense items such as VSR/C2M reach and 800G optical module specifications and execute on a few strategic silicon tape-outs in 1H2019 to bring 25.6Tbps into the light.

In my next blog I will lay out the foundational silicon steps to make 100G single-lane, end-to-end connectivity a mainstream reality in 2020. Stay tuned…